Categories as heuristics, pt 2

RIP DCB says: let’s hear more about categories as heuristics!

Here’s my pitch:

Heuristics and frames

Let’s define a heuristic as a tactic for perception or action which is suited to a “type” of situation.

By “perception,” I mean the descriptive apprehension and determination of what “is”; by “action” I mean the (normatively guided) response to perception.

Note that we’ve also (implicitly) invoked Schutz’s theory of types.

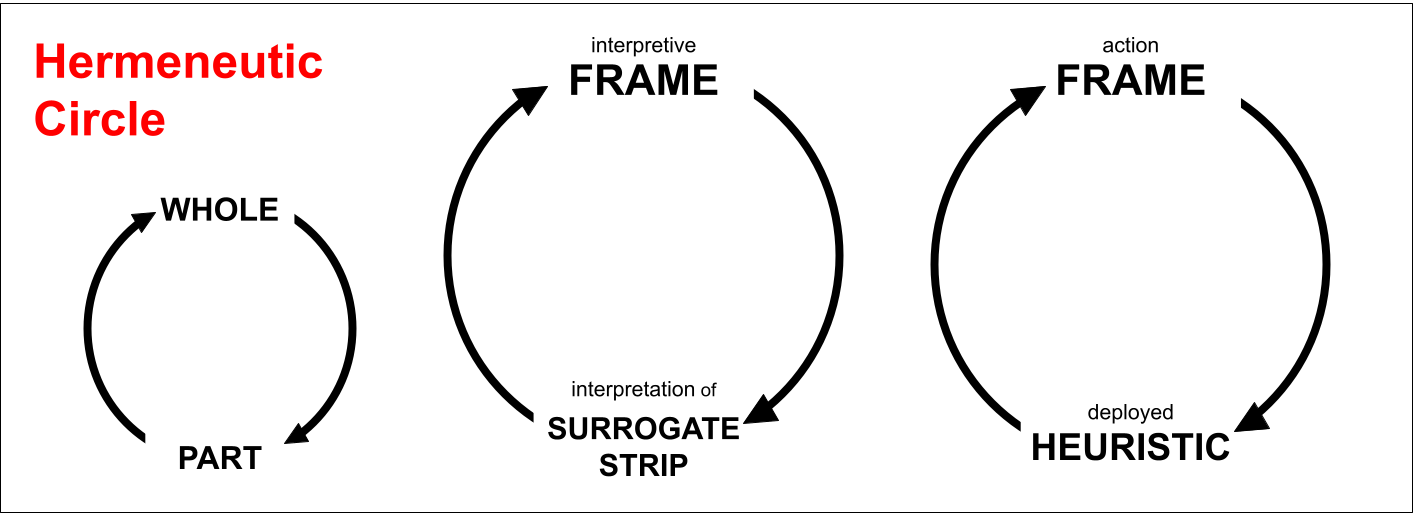

To understand heuristics, we have to understand frames (or “frameworks” or “schemas”). Frames exist outside of heuristics and are complementary to them. Heuristics author our frames: our sense of what “is” is a product of the tactics we use to monitor our environment. And frames author our heuristics: they are the normative force which, emerging from our perceptions, guides our actions.

The circularity problem (similar to chickens and eggs) of heuristics and frames is resolved by reference to a biological “seeding” of heuristics. Our sensory organs are themselves perceptual heuristics: responses fitted to a probability distribution of events (experienced in the past, expected in the future). Such a response becomes less and less effective as we move out along the distribution’s far tails and into the unknown, that is, into events it was not optimized over and that its model is not “aware” of.

Our strategy (bundle of tactics) for understanding what is, or what “type” of situation we are in, is composed of gradated bottom-up processing, from the “buzzing, blooming” level of pre-shapes and colors, to the attentional and cognitive prioritization of certain facets of our sensory field (over others), to the inference performed on these especially salient facets (e.g. regarding other agents’ intentions, the social status of an interaction, and similar high-level diagnoses).

We can think of the couplings between lower-level salient perceptual aspects, and their higher-level inferential meanings, as surrogates (or “cues”).

A concept, for instance, is something like a complex of (perceptually salient) surrogates for identification (“differences which make a difference”). The concept’s easily observable characteristics become a premise for inferring or assuming other, less observable, but pragmatically relevant properties. A poker player may develop a concept of when his opponent is bluffing, based on surrogates such as hand trembles, which allow him to infer (the more pragmatically relevant matter of) whether said opponent has a strong hand. Inevitably there will be circumstances, whether or not they come up in a given game, when the opponent’s hand trembles and yet his bet is honest (for instance, if he has recently quit drinking), or when his cards are bad but his hand is steady (for instance, if he is mistaken about the quality of his own cards).

And when (as in the action-oriented model) we speak so as to have an effect on another agent, we will employ a heuristic (“tactic”) for accomplishing this effect. (Even if the transaction, and desired effect, are as simple as greeting a friend, making a doctor’s appointment, or asking a woman for her phone number.) These utterances will work best in more “typical” (i.e. center-of-distribution) situations, to which the heuristic is best suited, and worst in atypical situations.

Heuristics are fundamentally associative because they link situation to tactic; they are scoped, indexical, contingent solutions—couplings of “is” and “ought.” The de-indexicalization of surrogates is sometimes called “reification.”

Heuristics in adversarial (language) games

Heuristics fail when they have pressure put on them. As we have discussed, heuristics work well in a central set of situations, and then fail as we diverge from typicality. In strategy games, players are therefore incentivized to push the distribution of events towards those which are more atypical for opponents, so that their opponents’ tactics (heuristics) will be least effective. If a fencer is strongest defensively, then force him onto the offensive. If America has zero nuclear icebreakers (because it has invested military resources optimizing for different types of problems…), and Russia has half a dozen, then Russia should be aggressive in pushing strategies and situations where a lack of nuclear icebreakers is a serious handicap.[1]

Conceptual analysis predictably failed to derive satisfactory, robust definitions of concepts because it put a heuristic (the concept) under adversarial pressure. Philosophers could always imagine some scenario, some situation, in which a posited definition, or set of conditions, failed to hold.

Successfully working with and around heuristics is, rather, a deeply cooperative practice. Miller’s Law for communication: First assume it’s true; then figure out how. Heuristics are fitted to frames: there is, for instance, a relationship between an agent’s goals and his utterances, or between his diagnosis of the present interactive game and his linguistic tactics. Interactants can therefore use their interlocutors’ deployed heuristics, which appear to them in the form of surrogates, to model higher-order intentionality (“project”) and understanding (“model”). This higher-order understanding of project and model can then be used to disambiguate, and make meaningful, more of the interlocutors’ deployed heuristics (observed surrogates).

[1] This encourages well-roundedness. Another word for well-roundedness may be diversity. Well-roundedness can be contrasted with optimization. A related strategy to well-roundedness is empowerment. If the well-rounded RPG player has a balanced distribution of skill points committed to different abilities, the highly empowered player has stockpiled skill points without yet investing them, waiting until a critical situation arises, and only then spending them in precisely the ability the situation calls for.