Boing! Or; utility is not a function

This article was originally published in a dream I had, where it was titled simply “Boing!” The current title is chosen as a compromise between legibility and an appreciation of the original work.

Let’s say that you’re Hazard trying to jump as high as possible on an indoor trampoline. If you wanted to, you could conceptualize your jumping as a function. It takes in an input force and it gives you an output height. Then you could, as Hazard did[1], imagine that you are not “jumping as high as possible” but instead “optimizing your jump function”.

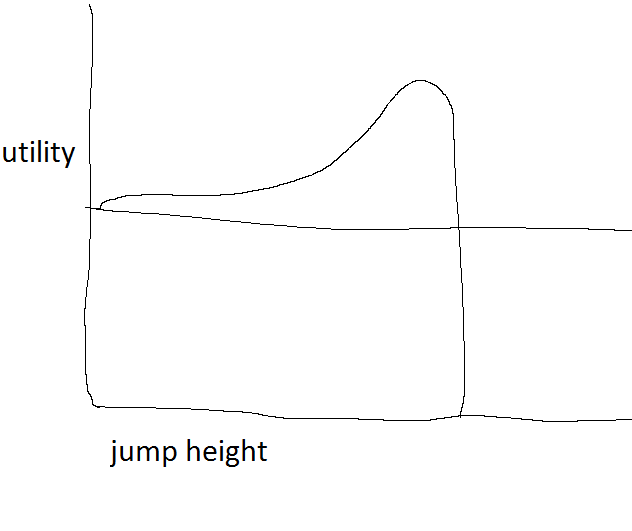

This becomes a problem when you remember the trampoline is indoors. If Hazard jumps too high, he’ll bonk his head! Is that what he wants? Well, no; he just never thought about it. So fine, he’s not optimizing his jump function, he’s optimizing his utility function for jumps. It goes up as the jumps get higher and higher, but craters into negative utility if he hits his head:

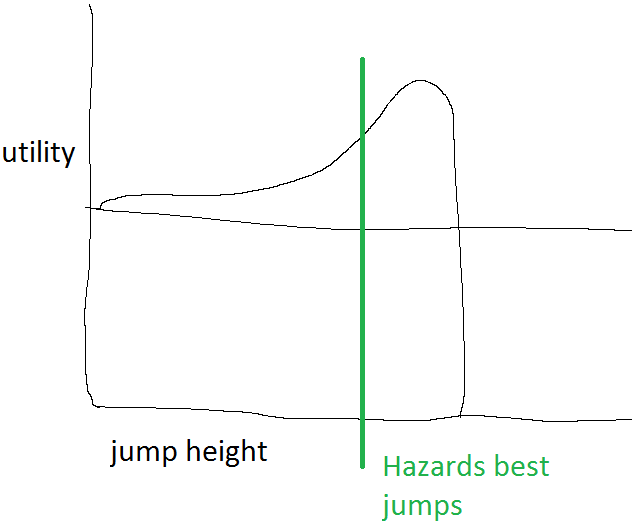

This is allowed in the technical sense of how functions work. You can pick a different output for every input point, and “optimizing” can mean finding the highest point in the output, even if it doesn’t come from an extreme value of input. But then why did Hazard think that what he was doing was “optimizing a jump function”? Because his ceiling is pretty high and he doesn’t have rocket legs, so by just doing a regular jump, he’s not in serious danger of hitting his head.
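As a rough sketch of that technical point, here is a toy version of the cratering curve in Python. The ceiling height, the penalty, and the candidate heights are all made-up numbers for illustration, not anything measured from Hazard's actual trampoline.

```python
CEILING = 3.0  # hypothetical ceiling height in metres (an assumption)


def jump_utility(height):
    """Utility rises with height, then craters once the jump reaches the ceiling."""
    if height >= CEILING:
        return -10.0  # bonk (the size of the penalty is arbitrary)
    return height


# Candidate jump heights from 0.0 to 4.0 metres in 0.5 m steps.
heights = [i * 0.5 for i in range(9)]

# "Optimizing" here means finding the input with the highest output,
# which is not the most extreme input available.
best = max(heights, key=jump_utility)
highest_possible = max(heights)
```

With these numbers, `best` lands just under the ceiling while `highest_possible` is the head-bonking jump, so the optimum sits in the middle of the input range rather than at its edge.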

If he was going to try to REALLY “optimize his jump function”, he could try to make a small explosion happen under his trampoline, or jury-rig a homemade jetpack, or all sorts of other things. And then he’d bump his head and say “wah!” and not actually be happy. “Optimize my jump function” for Hazard actually ended up meaning “Try my hardest to jump high, but only the regular kinds of trying, not any insane horseshit.”

This is a pretty common pattern of human behavior. Rather than trying to “fully explore” a fitness landscape to optimize the mystical “utility”, people will often just pick a frame that chops out the bad extreme values (no jetpacks!), even if it means not having the absolute most fun possible (notice that Hazard would get more utility out of a little jetpacking). Hazard isn’t walking around mathematically optimizing for the perfect jump; he’s creating a space where he can try his hardest at jumps without danger of overshooting, and then playing in that space.
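One way to picture the frame, as a loose sketch rather than a claim about what goes on in anyone's head: filter out the options you refuse to consider, then just maximize height among what's left. The option names, heights, and ceiling below are all invented for the example.

```python
CEILING = 3.0  # hypothetical ceiling height in metres (an assumption)

# (method, height reached in metres); every entry is made up
options = [
    ("small hop", 0.5),
    ("regular jump, full effort", 1.5),
    ("a little jetpacking", 2.5),
    ("homemade jetpack, full throttle", 4.0),
]


def utility(height):
    """Same toy shape as the picture: higher is better until you hit the ceiling."""
    return -10.0 if height >= CEILING else height


def in_frame(method):
    """The frame: only the regular kinds of trying, no jetpacks."""
    return "jetpack" not in method


# Inside the frame the rule is simple: jump as high as you can.
frame = [(method, height) for method, height in options if in_frame(method)]
best_in_frame = max(frame, key=lambda option: option[1])

# Exploring the whole landscape means scoring every option by utility instead.
best_overall = max(options, key=lambda option: utility(option[1]))
```

In this toy setup `best_in_frame` is the full-effort regular jump and `best_overall` is the little bit of jetpacking: the frame gives up some utility, but inside it "higher is better" is a safe rule to follow.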

Not all functions are uniformly increasing or decreasing, but when we find value in conceptualizing patterns as functions, it’s often because of those straightforward types of relationships. The more that the landscape is full of critical points, sudden switches, and other weirdness, the more we try to carve out a space within the landscape where we can follow simpler rules. To the extent that “utility” is an attempt to explain why agents actually do the things that they do (and what else could it mean?), we shouldn’t think of it as a single numeric value to be optimized. You need to consider the rules we follow when considering which things can even be thought of as functions. And while you could try to “compile” those rules into a master “utility function”, that’s not how you generated those rules, that’s not how you follow them, and that’s not how you’ll maintain them over time. So what’s the point of conceiving of your behavior as a “utility function”, aside from getting to pretend to yourself that mathematical models of behavior might someday start working better than the abysmal record they’ve had so far?

[1] To be clear, I am not recounting a thing that actually happened, I am attempting to accurately transcribe an article I wrote in the dream where this thing actually happened.